VCL or "Varnish Configuration Language" is an absolutely brilliant idea, which I may have said once or twice already, but it can be a bit much to wrap your head around the first time you approach it, and the built-in VCL notion is an extra curveball. So today, we sit down and tackle this.

We'll keep examples short and skip long descriptions and overly complex details, to focus on the high-level and almost philosophical concepts governing the VCL. Let's do this!

Y U NO use YAML?

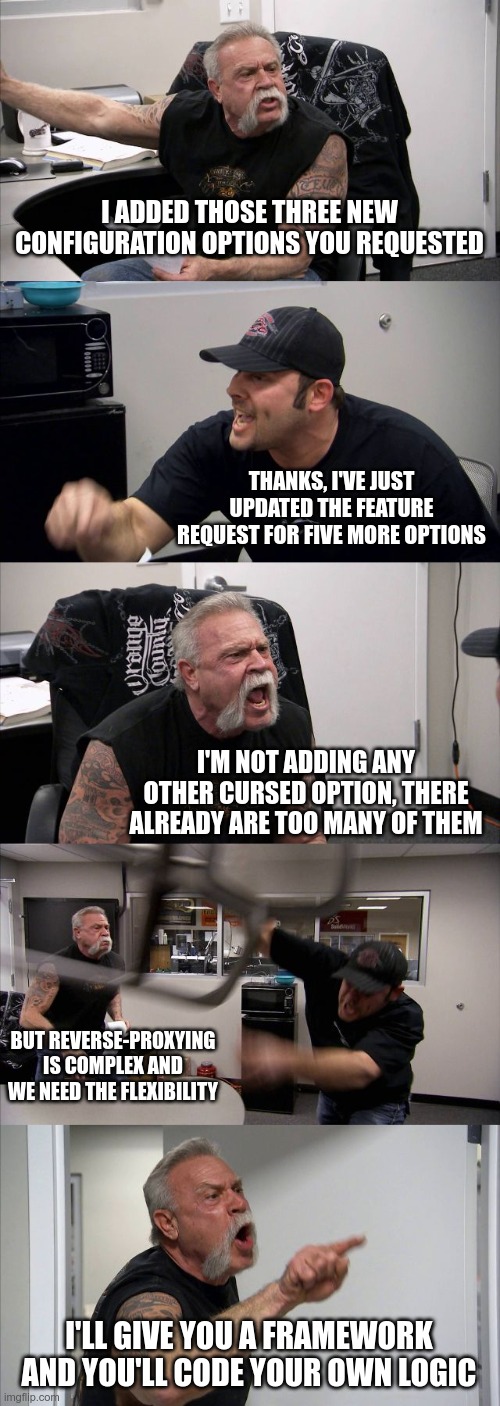

VCL is the perfect application of the old adage "teach a man to fish and you will feed him for a lifetime". The genesis of VCL is pretty simple and went something like this while hacking on Varnish (artist rendition, adjusted for extra drama):

And that's what started the VCL: a user hungry for features and a developer ready to give them more agency.

To answer the section title: YAML, or any other declarative language for that matter, only allows for setting options that the developers planned for. I usually liken this to piloting a train: you have control over the speed, and decide when to stop, but you are still stuck on the same rails. Varnish, on the other hand, gives you a good old car that you can tune to hit any road you want; you'll need to know where you are going, but the freedom is exhilarating.

And to hammer that point home: it's very easy to build a YAML schema that will generate a VCL configuration (and a lot of people do!), but the other way around is nigh impossible.

The true nature of VCL

You may have heard that VCL is transpiled into C, and then compiled into a shared library, allowing for blazing speed and atomic, faultless reloads. It's all true, but it's basically a side-effect of the nature of VCL. In truth, VCL is a plugin system much more than it is a configuration system.

Indeed, Varnish has a very good grasp of HTTP so it can handle it on its own with some sensible defaults, but because it knows that no hard-coded logic will ever cover every single use case, it bails out and just provides hooks for you to code your own logic.

For example, after receiving a request, Varnish will parse it, identify the URL, the headers, and will then reach out to you and ask "what do I do with this?" so you can decide the routing, access control, cacheability, etc. for each and every single request.

It may sound scary at first, but remember, the freedom is exhilarating, and you will love it in no time.
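To make that "what do I do with this?" question a bit more concrete before we look at the syntax properly, here is a rough sketch of one hook answering it for routing, access control and cacheability. The backend names and addresses, the `internal` ACL and the URL prefixes are all made up for illustration; treat it as one possible shape, not a recommended setup.

```vcl
vcl 4.0;

# hypothetical origins, the addresses are placeholders
backend web { .host = "192.0.2.10"; .port = "8080"; }
backend api { .host = "192.0.2.20"; .port = "8080"; }

# hypothetical list of trusted client addresses
acl internal {
    "127.0.0.1";
    "10.0.0.0"/8;
}

sub vcl_recv {
    # routing: pick an origin based on the URL
    if (req.url ~ "^/api/") {
        set req.backend_hint = api;
    } else {
        set req.backend_hint = web;
    }

    # access control: only trusted clients may reach /admin/
    if (req.url ~ "^/admin/" && client.ip !~ internal) {
        return (synth(403));
    }

    # cacheability: never cache account pages, always go to the origin
    if (req.url ~ "^/account/") {
        return (pass);
    }
}
```

Each decision is plain code, so adding a fourth rule means adding a fourth `if`, not waiting for a new configuration option.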

Hook me in

At each important step of the request processing, Varnish will give you control, and you can do two things with it:

- read/write the request and response objects

- decide what the next processing step should be

If you are already familiar with a programming language, you won't be lost, and if you are not, it'll still be easy: the VCL syntax is very simple! Have a look at this example:

```vcl
# this is a comment
# vcl_recv is called as soon as a user request is received
# in it, we have access to the req object
sub vcl_recv {
    # check the URL, nobody should access the /forbidden/ folder,
    # if someone tries, pretend to not find the page (404)
    if (req.url ~ "^/forbidden/") {
        # by returning, we stop processing the request ourselves
        # and tell varnish what it should do next
        return (synth(404));
    }

    # if there is any Authorization header, disable caching, and just "pass"
    # the request to the origin
    if (req.http.authorization) {
        return (pass);
    }

    # otherwise, add a header to tell the origin we'll cache
    set req.http.cacheable = "absolutely!";
}
```

If that is readable, you are already there! It's just a matter of knowing which subroutine is called at which step, which objects are available in each subroutine, and a couple of extra keywords. And it turns out my colleague Thijs just wrote a book about this!
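To give a feel for "which subroutine, which objects", here is a small sketch of two other hooks. `vcl_backend_response` runs once the origin has answered and works on `bereq`/`beresp`; `vcl_deliver` runs just before the response goes back to the user and works on `resp`. The file extensions, the one-hour TTL and the `X-Cache` header name are arbitrary choices made for this illustration.

```vcl
vcl 4.0;

# placeholder origin
backend default { .host = "1.2.3.4"; }

sub vcl_backend_response {
    # beresp is the response we just got from the origin,
    # bereq is the request we sent to it
    if (bereq.url ~ "\.(css|js|png)$") {
        # keep static assets in cache for an hour
        set beresp.ttl = 1h;
    }
}

sub vcl_deliver {
    # resp is the response about to be sent to the user,
    # obj.hits counts how many times the cached object was served
    if (obj.hits > 0) {
        set resp.http.X-Cache = "HIT";
    } else {
        set resp.http.X-Cache = "MISS";
    }
}
```

Neither subroutine returns anything here, which is exactly the situation the next section is about.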

Do as you're (not) told

If you check the VCL snippet above, you'll notice that in the cacheable case, we don't bother returning anything, so how does Varnish know the next processing step then? Surely it will have a default value?

Well, yes and no! But to explain this quirkiness, I need to tell you something about VCL first. You remember I told you it's a plugin system, right? Well, you can implement multiple hooks for the same step, for example:

```vcl
# we'll need the standard module to push some logs
import std;

sub vcl_recv {
    std.log("first");
}

sub vcl_recv {
    std.log("second");
    if (req.http.cookie) {
        return (pass);
    }
}

sub vcl_recv {
    std.log("third");
}
```

It's of course a bit of a silly example, but it illustrates the point very well. If you check the logs of a cookie-less request, you will see this:

- VCL_Log first

- VCL_Log second

- VCL_Log third

So each vcl_recv was executed in the order it was declared, which is pretty nice because you can split the logic into multiple blocks and even multiple files, and they will all be called implicitly. Nice.

But there's more! If one of the subroutines returns, it will prevent the subsequent subroutines from running, which makes sense since Varnish now knows the next processing step and doesn't need to run the other routines to continue. This is why a request with a cookie would produce this log:

- VCL_Log first

- VCL_Log second

So far, so good, but what does this have to do with that there-is-a-default-return-but-not-really thing? Well, Varnish plays a little trick on us when loading your configuration: it automatically slaps the built-in VCL onto the end of your file. There's no default value per se, but if you, as a user, haven't provided a return value, then the built-in logic will run.

The implication is pretty fun: your VCL can be as short as this:

```vcl
# 4.0 is the VCL syntax version, not the Varnish version
vcl 4.0;

backend default { .host = "1.2.3.4"; }
```

With that, you'd just follow the built-in logic, and you can override any of the subroutines by simply adding your own version to your file.
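Here is a minimal sketch of both behaviors living in the same subroutine. It relies on the stock built-in `vcl_recv` passing any request that carries a `Cookie` or `Authorization` header; the `/static/` prefix is an assumption made for the example.

```vcl
vcl 4.0;

backend default { .host = "1.2.3.4"; }

sub vcl_recv {
    if (req.url ~ "^/static/") {
        # we return explicitly, so the built-in vcl_recv never runs
        # for these requests: we are fully in charge of them
        unset req.http.Cookie;
        return (hash);
    }

    # no return here: everything else falls through to the built-in
    # vcl_recv, which will pass requests carrying a Cookie or an
    # Authorization header, and look the others up in the cache
}
```

The trade-off is worth keeping in mind: an explicit return skips all of the built-in checks for that step, not just the one you wanted to avoid.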

The power of genericity

The whole VCL concept has an amazing consequence for both users and developers: modules/extensions/plugins are conceptually simpler, easier to use and more versatile.

In Varnish, extensions are called "VMODs" (for VCL Modules), and keeping in line with previous logic, they are not configuration extensions but language extensions, making them a lot more powerful.

Let's have a completely random and non-opinionated example with mod_rewrite and vmod_rewrite.

mod_rewrite is a very powerful Apache module that allows you to transform the URL of a request, and possibly send an HTTP redirect to the user. It also has an inordinate amount of options, because the module needs to hook itself into the core logic of Apache, understand HTTP concepts such as the host header, the path or the URL, and be able to trigger HTTP redirects on its own.

On the other hand, vmod_rewrite is blind to all of this: it only manipulates strings and lets the VCL be the glue that performs the more generic actions. This makes vmod_rewrite both more generic and more powerful: it covers the same basic use cases very simply, but it can also drive an unrelated access control scheme that the Apache module doesn't support, and even some wackier ideas that the developers never planned for.
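The "strings in, strings out, VCL as glue" split can be sketched without vmod_rewrite at all. The example below deliberately uses the built-in `regsub()` function as a stand-in for the string manipulation, so it says nothing about vmod_rewrite's actual API. The `/old/` and `/new/` prefixes and the `X-Redirect-To` header are hypothetical.

```vcl
vcl 4.0;

backend default { .host = "1.2.3.4"; }

sub vcl_recv {
    if (req.url ~ "^/old/") {
        # the string tool only computes a new string...
        set req.http.X-Redirect-To = regsub(req.url, "^/old/", "/new/");
        # ...and the VCL decides that this means "send a redirect"
        return (synth(301));
    }
}

sub vcl_synth {
    # the VCL is also the part that knows what an HTTP redirect looks like
    if (resp.status == 301 && req.http.X-Redirect-To) {
        set resp.http.Location = req.http.X-Redirect-To;
        return (deliver);
    }
}
```

Because the rewritten string is just a string, the same call could feed a backend choice or an access check instead of a `Location` header, with no change to the string tool itself.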

Power to the user

As a developer, I'm obviously grateful to be able to code my configuration rather than being constrained by whatever was hard-coded in the software. With platforms moving faster and faster, the need for versatility is now greater than ever, and Varnish offers the power of a blazingly fast and stable reverse-proxy with the customizability (is that a word? it should be!) of a home-grown project, without the boilerplate.

As announced, we talked more about the nature of VCL and stayed away from practical details, so this looks like the perfect opportunity for you to start diving into the technical aspects with the excellent Varnish book available here 👇