Yves Hwang

Latest Articles

- Yves Hwang

- October 24, 2014

Web traffic analysis software is synonymous with bouncing traffic and user data to a third-party vendor or trawling...

- Yves Hwang

- March 25, 2014

Managing multiple Varnish instances and their respective VCL is made significantly easier with Varnish Administration...

- Yves Hwang

- February 11, 2014

Typically, Varnish Cache sits in front of your application servers, whether in public or private clouds. The...

- Yves Hwang

- September 13, 2013

The Super Fast Purger was conceived as a plugin for the Varnish Administration Console. The current implementation is...

- Yves Hwang

- March 13, 2013

Varnish Administration Console (VAC) is split into two major components: its Management UI and a RESTful API, with...

- Yves Hwang

- December 6, 2012

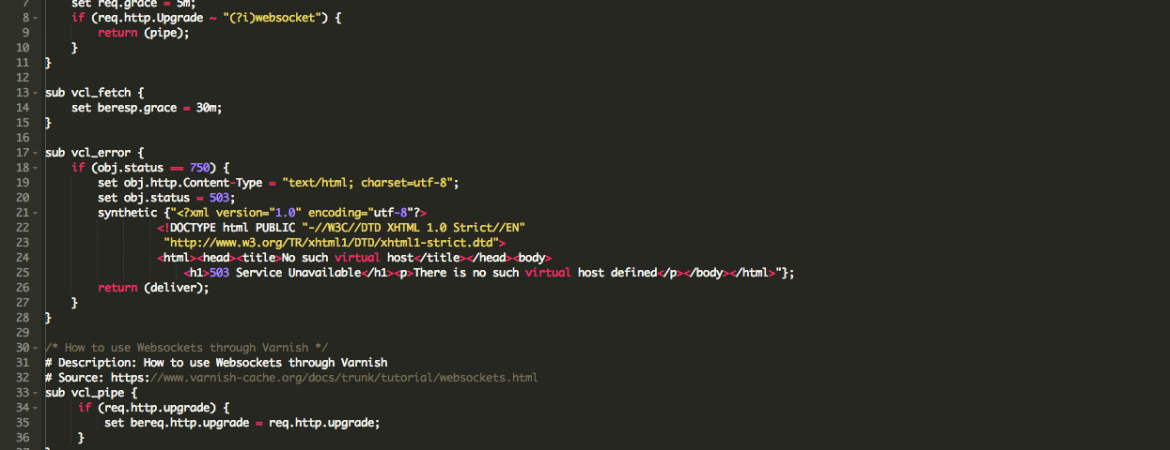

Whilst Varnish is the go-to product for high performance and high availability caching, we often overlook the...

