Francisco Velazquez

Latest Articles

VCL

MSE

TLS/SSL

debugging Varnish

top ten Varnish questions

top ten need to know about varnish

cache persistence

- Francisco Velazquez

- September 25, 2017

Whether we meet seasoned or novice users of Varnish, the caching software we all love, there are a number of questions...

- Francisco Velazquez

- July 25, 2017

Parallel ESI is a performance-enhanced version of open source ESI. Parallel ESI issues all the ESI includes in...

- Francisco Velazquez

- January 7, 2016

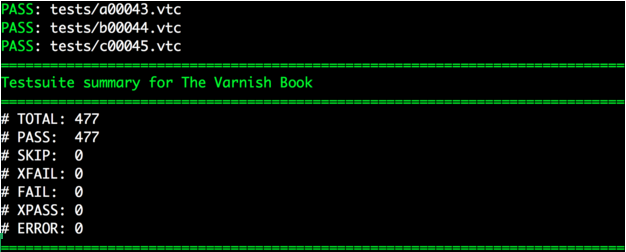

Have you used varnishtest? Maybe not, but we’ve just made it a lot easier for you to adopt and put into practice!

- Francisco Velazquez

- October 26, 2015

Varnish has an ecosystem for third-party modules – Varnish Cache Modules (VMODs). A VMOD is a shared library that can...

- Francisco Velazquez

- July 22, 2015

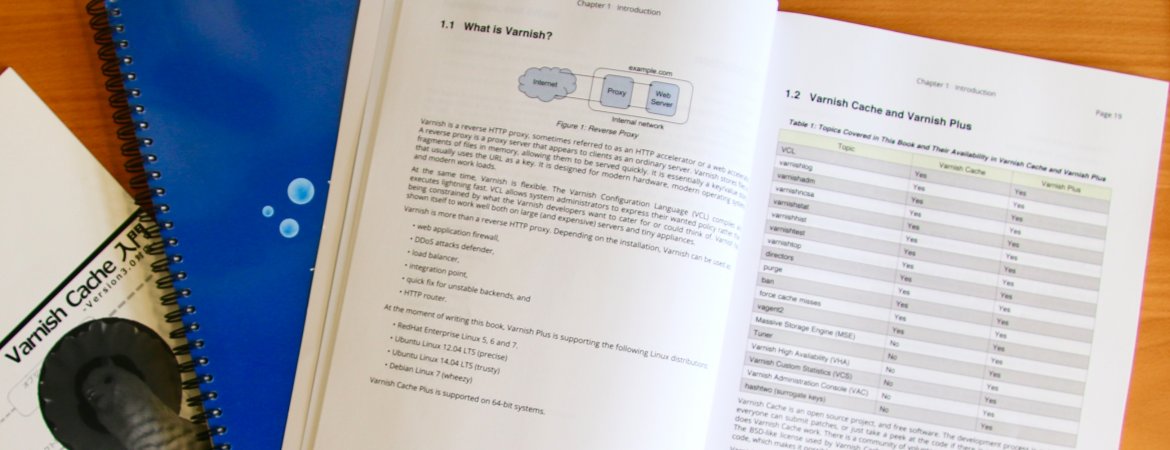

It’s finally here. The latest edition of the Varnish Book for Varnish 4 and Varnish Plus. This edition is a major...

Explore articles from Varnish experts on web performance, advanced caching techniques, CDN optimization and more, plus all the latest tips and insights for enhancing your content delivery operations.